A Two-Phase Deep Learning Model for Counterfeit Detection of Indian Banknotes using YOLO-NAS and UV Imaging for Visually Impaired People

¹ Dept. of Computer Science and Engineering, Maharishi Markandeshwar (Deemed to be University) M.M Engineering College, Mullana, 133203, Ambala, India

² Dept. of Computer Science and Engineering, Maharishi Markandeshwar (Deemed to be University) M.M Engineering College, India

*Author to whom correspondence should be addressed:

E-mail: payal49691@gmail.com (PC)

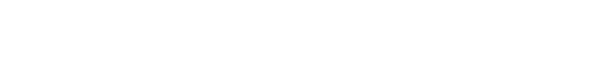

Received: March 10, 2025 | Revised: June 10, 2025 | Accepted: July 16, 2025 | Published: September 2025

Abstract

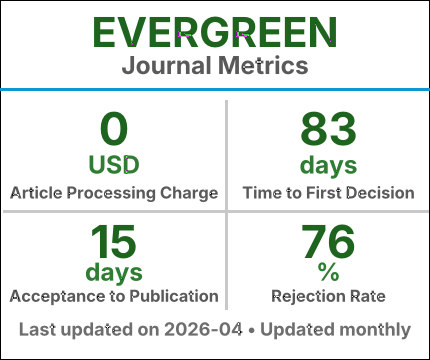

Counterfeit currency poses a significant financial and security threat, and counterfeit notes often mimic genuine ones so closely that the human eye struggles to tell them apart. The problem is even more acute for visually impaired people, who have difficulty distinguishing authentic banknotes from counterfeit ones. To address this, a two-phase approach is proposed that uses You Only Look Once - Neural Architecture Search (YOLO-NAS) to detect and verify Indian rupee notes under ultraviolet (UV) light. In the first phase, the visible and invisible characteristics of a currency note are identified; in the second phase, the note is authenticated on the basis of advanced security features that are detectable only under UV light. The model's performance is evaluated on two datasets: the Indian and Thai banknotes dataset and a self-designed dataset. The first experiment, on the Indian and Thai banknotes dataset, achieved an accuracy of 85.92%; a second experiment, on the self-designed dataset, achieved a higher accuracy of 91.02%. Furthermore, an audio-based output system is integrated to help visually impaired individuals recognize and verify banknotes. Experimental results indicate that the proposed method improves counterfeit detection and is well suited to practical use.
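The two-phase decision flow summarized above can be illustrated with a minimal Python sketch. It assumes that a YOLO-NAS detector has already been run on a visible-light image and on a UV image of the same note, and that each run yields (label, confidence) pairs. The class names (`rs_500`, `security_thread`, `uv_serial_number`, and so on), the 0.5 confidence threshold, and the rule "any missing required feature means possible counterfeit" are hypothetical and are not taken from the paper. The returned string stands in for the audio output; a text-to-speech engine would read it aloud.

```python
from dataclasses import dataclass

# Hypothetical feature labels: the paper's actual class names are not given here.
REQUIRED_VISIBLE = {"security_thread", "watermark", "see_through_register"}
REQUIRED_UV = {"uv_serial_number", "uv_security_thread"}


@dataclass(frozen=True)
class Detection:
    label: str         # class name predicted by the detector
    confidence: float  # detector score in [0, 1]


def present_labels(detections, threshold=0.5):
    """Return the set of labels detected with at least `threshold` confidence."""
    return {d.label for d in detections if d.confidence >= threshold}


def phase_one(visible_detections, threshold=0.5):
    """Phase 1 (sketch): identify the denomination and check visible-light features."""
    labels = present_labels(visible_detections, threshold)
    notes = [d for d in visible_detections
             if d.label.startswith("rs_") and d.confidence >= threshold]
    # Keep the most confident denomination label, if any was found.
    denomination = max(notes, key=lambda d: d.confidence).label if notes else None
    return denomination, REQUIRED_VISIBLE - labels


def phase_two(uv_detections, threshold=0.5):
    """Phase 2 (sketch): return the required UV-only features that were NOT detected."""
    return REQUIRED_UV - present_labels(uv_detections, threshold)


def verify_note(visible_detections, uv_detections, threshold=0.5):
    """Combine both phases into a message suitable for text-to-speech output."""
    denomination, missing_visible = phase_one(visible_detections, threshold)
    if denomination is None:
        return "Note not recognized. Please reposition the note."
    missing_uv = phase_two(uv_detections, threshold)
    value = denomination.split("_", 1)[1]  # "rs_500" -> "500"
    if missing_visible or missing_uv:
        return f"{value} rupee note. Warning: possible counterfeit."
    return f"{value} rupee note. Genuine."


visible = [Detection("rs_500", 0.93), Detection("security_thread", 0.88),
           Detection("watermark", 0.81), Detection("see_through_register", 0.77)]
uv = [Detection("uv_serial_number", 0.90)]  # the UV security thread is missing
print(verify_note(visible, uv))  # prints "500 rupee note. Warning: possible counterfeit."
```

Gating phase 2 on a successful phase 1 mirrors the order described in the abstract: the note is first recognized, then authenticated with UV-only features. A real system would replace the hard-coded label sets with the trained model's classes and tune the threshold on validation data.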

Keywords

Deep Learning; YOLO-NAS; Counterfeit Currency Detection; Ultraviolet Imaging; Visually Impaired Assistance
